AI medical scribes are transforming healthcare workflows. They reduce documentation time and improve efficiency, but they also operate in one of the most sensitive data environments in any industry.

Unlike typical AI tools, they process live patient conversations, generate clinical records, and handle protected health information (PHI) at every stage. This is where many teams get it wrong. HIPAA is not a checklist. It is a system design requirement that directly impacts your architecture, data flows, and every component that handles patient data.

In this guide, you will learn how to make an AI medical scribe HIPAA compliant from both a technical and business perspective, and how to build systems that clinics can trust from day one. This guide is for the founders, CTOs, and compliance officers responsible for building and scaling secure, audit-ready clinical AI.

What is an AI Scribe from the HIPAA Perspective?

An AI medical scribe is not just a productivity tool. From a HIPAA perspective, it is a system that continuously processes protected health information (PHI) across an entire clinical workflow.

Unlike traditional software, it actively participates in care by capturing conversations, transforming them into structured data, and generating clinical records.

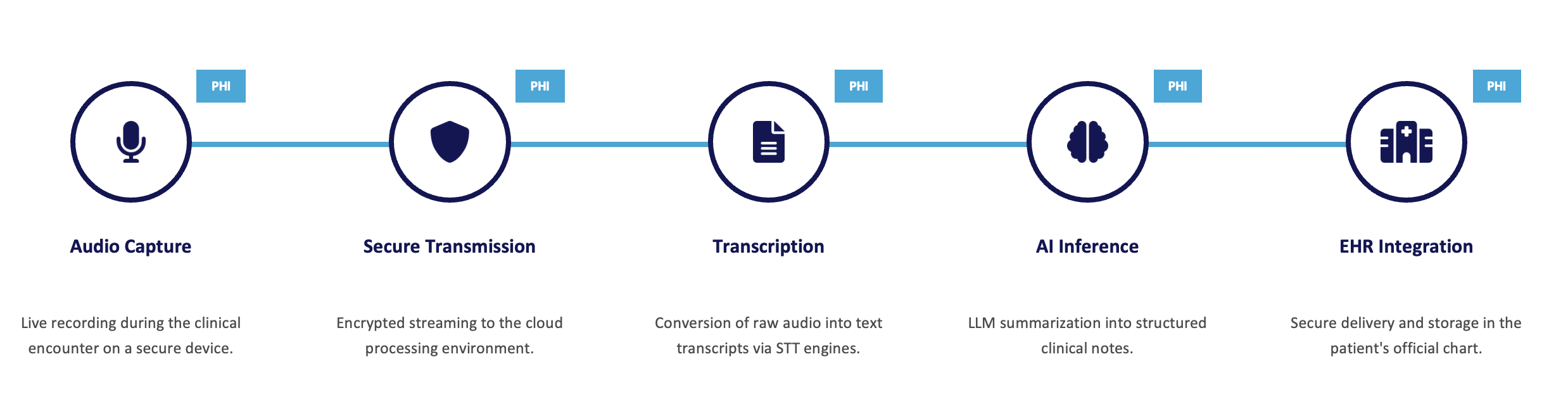

This means PHI is handled at multiple stages, not just stored in one place:

- Audio capture of patient–provider conversations

- Secure transmission to processing systems

- Transcription into text

- AI inference to generate clinical notes

- Integration into EHR systems

As shown in the diagram above, PHI exists at every step of this pipeline. It moves across systems, formats, and environments, increasing both risk and responsibility.

This is the key shift. HIPAA compliance is not about securing a database, it is about securing the entire data flow.

Because of this, AI scribes operate as Business Associates under HIPAA, and must be designed with security, privacy, and compliance built in from the start.

What is Covered in this Guide

This guide provides a structured breakdown of how to approach building a HIPAA compliant AI medical scribe. It covers both system design and operational requirements, including how patient data flows through your platform, where risks appear, and what controls are required to operate securely in a healthcare environment.

| Section | What You Will Learn |

|---|---|

| 1. AI Scribe Fundamentals | Understand how AI medical scribes operate in real clinical workflows and why they continuously process sensitive patient data across multiple system layers. |

| 2. Where PHI Exists in the System | Break down the full data flow from audio capture to EHR integration and identify where protected health information appears at each stage. |

| 3. HIPAA Scope and Obligations | Learn when an AI scribe becomes a Business Associate, what triggers compliance, and the legal responsibilities tied to handling PHI. |

| 4. Common Compliance Mistakes | Identify high-risk assumptions and design errors such as PHI leakage in logs, improper testing data, and misunderstanding cloud responsibility. |

| 5. Secure System Architecture | Design a HIPAA-compliant AI pipeline with encryption, isolation, and controlled data flow across capture, processing, and storage layers. |

| 6. Technical Safeguards | Implement core controls such as encryption, RBAC, MFA, audit logging, and secure session management to protect electronic PHI. |

| 7. Vendor and Cloud Risk | Understand shared responsibility, BAA requirements, and how third-party AI models and cloud providers impact your compliance posture. |

| 8. Breach Risks and Prevention | Explore real-world breach scenarios such as prompt injection, logging leaks, and model memorization, along with mitigation strategies. |

| 9. Compliance Program Setup | Build a complete HIPAA compliance program including risk assessments, policies, workforce training, and continuous monitoring. |

| 10. Business Impact of Compliance | Understand how compliance affects deals, funding, and trust, and why a single incident can impact the survival of a healthcare AI startup. |

1. AI Scribe Fundamentals

AI medical scribes operate directly within clinical workflows, not as background analytics tools. They capture real-time patient–provider interactions and convert them into structured clinical documentation that becomes part of the official medical record.

If the generated documentation is used to make decisions about a patient or becomes part of the official medical record, it may be considered part of a Designated Record Set under HIPAA. This introduces additional obligations, including patient rights to access, amend, and receive copies of their data.

This makes them fundamentally different from typical AI systems. They:

- Process data in real time, not in delayed batches

- Generate primary clinical documentation, not just insights

- Integrate directly with clinical systems such as EHRs

Because of this, they continuously handle protected health information across multiple layers including capture devices, transmission channels, processing environments, and storage systems.

From a HIPAA perspective, this means the system must be treated as an end-to-end PHI processing pipeline, not a single application.

2. Where PHI Exists in the System

In AI scribe systems, protected health information does not exist in a single database, which makes them different from many typical software products that deal with health data. It appears across multiple components and in different forms throughout the data lifecycle.

PHI is present in:

- Audio capture: live patient–provider conversations recorded on devices

- Data in transit: encrypted streams sent to processing environments

- Transcription outputs: text generated from speech-to-text systems

- AI inference data: inputs and outputs used by models to generate summaries

- Final records: structured clinical notes stored in EHR systems

In addition to primary data flows, PHI can also appear in secondary locations such as:

- Application and infrastructure logs

- Temporary files and processing buffers

- Caches and intermediate storage layers

This distribution of data introduces hidden risk. Securing PHI requires visibility and control across all layers where data is created, processed, or stored, not just the final database.

3. HIPAA Scope and Obligations

An AI medical scribe enters HIPAA scope the moment it handles protected health information on behalf of a healthcare provider. At that point, it is classified as a Business Associate and must comply with HIPAA requirements.

This is typically triggered when the system:

- Processes live clinical conversations containing patient identifiers

- Generates documentation that becomes part of the patient’s official record

- Integrates with EHR systems or clinical workflows

- Operates on behalf of a Covered Entity such as a clinic or hospital

A key requirement at this stage is a Business Associate Agreement (BAA). Processing PHI without a signed BAA is not compliant, regardless of technical safeguards. A BAA must be fully executed before any PHI is processed, transmitted, or stored. Retroactive agreements do not resolve prior non-compliant data handling.

Key HIPAA obligations for AI scribes include:

- Implementing administrative, physical, and technical safeguards

- Conducting a Security Risk Analysis (SRA)

- Maintaining audit logs and access controls

- Ensuring breach detection and notification processes

There is no exemption for early-stage products that handle real PHI. However, teams can avoid HIPAA scope during early development by using fully de-identified data (in accordance with HIPAA standards) or synthetic data that contains no patient identifiers.

HIPAA compliance is governed by multiple rules, including the Privacy Rule (which governs how PHI can be used and disclosed), the Security Rule (which defines safeguards for electronic PHI), and the Breach Notification Rule (which requires reporting of certain incidents). A compliant system must address all three.

4. Common Compliance Mistakes

Most compliance failures in AI scribe systems come from incorrect assumptions made early in development. These issues are often architectural, not just operational.

Common high-risk mistakes include:

- Assuming HIPAA applies later

Teams delay compliance until after product development, which leads to costly redesigns and failed clinical deployments.

- Relying on cloud providers for compliance

Cloud platforms operate under a shared responsibility model. You are responsible for configuring security, access, and data protection correctly.

- Using real PHI in testing environments

Development and testing with raw patient data without proper de-identification is a direct violation.

- PHI leakage into logs and support systems

Debug logs, monitoring tools, and support tickets often unintentionally contain patient identifiers.

- Uncontrolled data duplication

PHI can spread into caches, temporary files, and intermediate processing layers without proper lifecycle management.

- Lack of proper de-identification practices

Teams often assume removing obvious identifiers is sufficient. Under HIPAA, data is only considered de-identified if it meets either the Safe Harbor method (removal of 18 specific identifiers) or Expert Determination. Anything less may still be considered PHI and subject to full compliance requirements.

These mistakes are difficult to fix later because they are embedded into system design. The most effective approach is to treat compliance as a core engineering requirement from the start, not as a post-build activity.

5. Secure System Architecture

A HIPAA compliant AI scribe must be designed as a secure data pipeline where every stage that handles PHI is controlled and protected.

The architecture should enforce security across the full lifecycle:

- Capture layer: secure devices and authenticated audio ingestion

- Transmission layer: encrypted data in transit using TLS

- Processing layer: isolated environments for transcription and AI inference

- Storage layer: encrypted databases and object storage

- Delivery layer: secure integration with EHR systems

Key design principles include:

- Data minimization: only process the PHI required for the task

- Environment isolation: separate workloads to prevent data leakage between processes

- Controlled data flow: define and restrict how PHI moves between services

- Retention limits: automatically delete or purge data when no longer needed

- Minimum necessary standard: Ensure that all uses, disclosures, and access to PHI are limited to the minimum amount of information required to accomplish the intended purpose, in accordance with HIPAA requirements.

A common failure is treating security as a layer added later. In practice, architecture decisions made early determine whether the system can meet HIPAA requirements at all.

6. Technical Safeguards

HIPAA requires specific technical controls to protect electronic PHI and ensure that access and activity can be monitored and verified.

Core safeguards include:

- Encryption

Use strong encryption for data at rest and TLS 1.2+ for all data in transit across the system. - Access control (RBAC)

Enforce role-based access so users only access the minimum data required for their role. - Multi-factor authentication (MFA)

Protect all systems handling PHI, including admin consoles and integrations. - Audit logging

Record all access, system activity, and changes involving PHI with tamper-resistant logs. - Session management

Implement secure tokens, automatic timeouts, and protection against unauthorized session reuse.

In addition, safeguards must extend to:

- Inference environments to prevent data leakage between model executions

- APIs and service communication to ensure encrypted and authenticated requests

- Temporary storage and caches to prevent unintended persistence of PHI

- Logging systems to ensure PHI is never written to application logs, monitoring tools, or error tracking systems unless explicitly required and properly secured

These controls are not optional enhancements. They are required to meet the Security Rule and to provide visibility, accountability, and protection across the system.

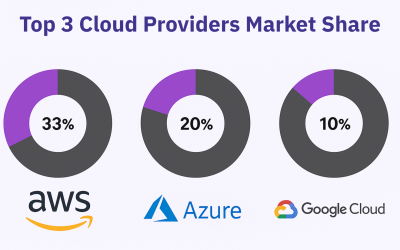

7. Vendor and Cloud Risk

AI scribe systems rely heavily on third-party services, including cloud infrastructure, speech-to-text engines, and AI model providers. Each of these vendors becomes part of your compliance scope.

HIPAA operates under a shared responsibility model:

- The cloud provider secures the underlying infrastructure

- You are responsible for securing data, access, and configurations

Any vendor that handles PHI must:

- Be HIPAA-eligible (for cloud services)

- Sign a Business Associate Agreement (BAA)

- Implement appropriate security and data handling controls

Not all vendors are eligible to handle PHI. Some AI and SaaS providers explicitly prohibit the use of their services for processing PHI in their terms of service. Using such vendors without appropriate agreements can result in immediate non-compliance.

This applies across the entire vendor chain:

- Cloud providers (AWS, Azure, GCP)

- AI/LLM providers used for inference

- Subprocessors handling storage, logging, or analytics

A critical requirement is BAA flow-down. If your system uses subcontractors, each one must also be covered by a BAA. Without this, your compliance posture is incomplete.

Vendor risk is often underestimated. In practice, your system is only as secure as the weakest provider in your architecture.

8. Breach Risks and Prevention

AI scribe platforms introduce unique risks that go beyond traditional applications due to the way they process and generate data.

Common breach scenarios include:

- Prompt injection attacks

Malicious input designed to manipulate the model into exposing sensitive data or bypassing controls.

- Logging leaks

PHI unintentionally captured in application logs, monitoring systems, or debugging tools.

- Improper model training and data handling

Risk that models trained or fine-tuned on PHI without proper controls may expose sensitive data. In practice, more common risks include PHI leakage through logs, storage systems, or misconfigured access controls.

- Unauthorized access

Internal or external access to PHI due to weak access controls or misconfigurations.

Under HIPAA, any impermissible use or disclosure of PHI is presumed to be a breach unless proven otherwise through risk assessment.

To reduce risk:

- Enforce strict input and output controls for AI systems

- Prevent PHI from entering logs, support tools, or analytics systems

- Use de-identified or synthetic data for testing and training

- Continuously monitor and audit system activity

Breach prevention is not a one-time task. It requires ongoing visibility, validation, and control across the entire system.

9. Compliance Program Setup

Building a HIPAA compliant AI scribe requires more than technical controls. It requires a structured compliance program that governs how your organization handles PHI.

Core components include:

- Security Risk Analysis (SRA)

A required, documented assessment to identify vulnerabilities and define remediation actions.

- Policies and procedures

Written documentation covering data handling, access control, incident response, and retention.

- Workforce training

Regular training for anyone with access to PHI, including developers, support, and operations teams.

- Access governance

Ongoing reviews to ensure users only have the minimum necessary access for their role.

- Audit and monitoring

Continuous tracking of system activity, access events, and potential security issues.

HIPAA also requires maintaining evidence of compliance, including logs, risk assessments, and policy documentation for extended periods.

A common mistake is treating compliance as documentation only. In practice, it is an operational system that must be actively maintained and enforced.

10. Business Impact of Compliance

HIPAA compliance is not just a regulatory requirement. It directly impacts your ability to operate, sell, and grow in the healthcare market.

From a business perspective:

- No compliance means no deals

Clinics and health systems will not engage without a signed BAA and proof of safeguards.

- Due diligence is strict

Security reviews, risk assessments, and documentation are required before pilots or contracts.

- Funding is affected

Investors increasingly evaluate security and compliance as part of technical due diligence.

- Trust is critical

A single incident can damage reputation and prevent future partnerships.

Non-compliance introduces serious risk:

- Financial penalties and legal liability

- Loss of customers and contracts

- Long-term damage to brand credibility

In healthcare, security is not optional. It is part of the product. Teams that treat compliance as a core capability move faster in sales, build stronger partnerships, and create systems that can scale safely.

Final Thoughts

Building an AI medical scribe is not just a technical challenge. It is a responsibility to handle some of the most sensitive data that exists.

HIPAA compliance is not something you add later. It shapes how your system is designed, how data flows through it, and how your team operates day to day. Every decision, from architecture to vendor selection, directly impacts your ability to protect patient information and work with healthcare organizations.

The teams that succeed in this space understand one thing clearly.

Compliance is not a blocker. It is an enabler.

It allows you to pass due diligence, win clinical trust, and scale your product in a regulated environment. More importantly, it ensures that the systems you build are safe, reliable, and aligned with how healthcare operates.

If you approach HIPAA as a core part of your product from the beginning, you will not only reduce risk, you will build something that clinics are willing to adopt and rely on.

This content is for educational purposes only and does not constitute legal or compliance advice. Always consult a qualified professional for HIPAA-related decisions.